When schools and universities moved quickly toward blended and hybrid models after the pandemic, many didn’t stop to ask one simple question: Do our assessments still match what we teach?

It’s easy to assume that if you’re using video lectures, discussion boards, and in-person labs, your tests and assignments must be fine. But that’s not true. Assessment alignment isn’t automatic. It’s something you have to build - deliberately - across different learning environments.

What Does Assessment Alignment Even Mean?

Assessment alignment means your tests, assignments, and grading methods actually measure the learning outcomes you set out to achieve. If your goal is for students to apply critical thinking to real-world problems, but your final exam is all multiple-choice definitions, you’ve got a mismatch.

In traditional classrooms, teachers had more control over pacing and environment. Now, in blended models - where students alternate between online and in-person sessions - and hybrid models - where some students are remote while others are on campus - the learning experience is fragmented. If assessments don’t reflect that split, they stop measuring what students actually learned.

For example, a student in Auckland taking a biology course might watch a lab demonstration online, then come in for a hands-on session. If the assessment only tests their recall of the video, they’re being graded on passive learning, not the skill they actually practiced.

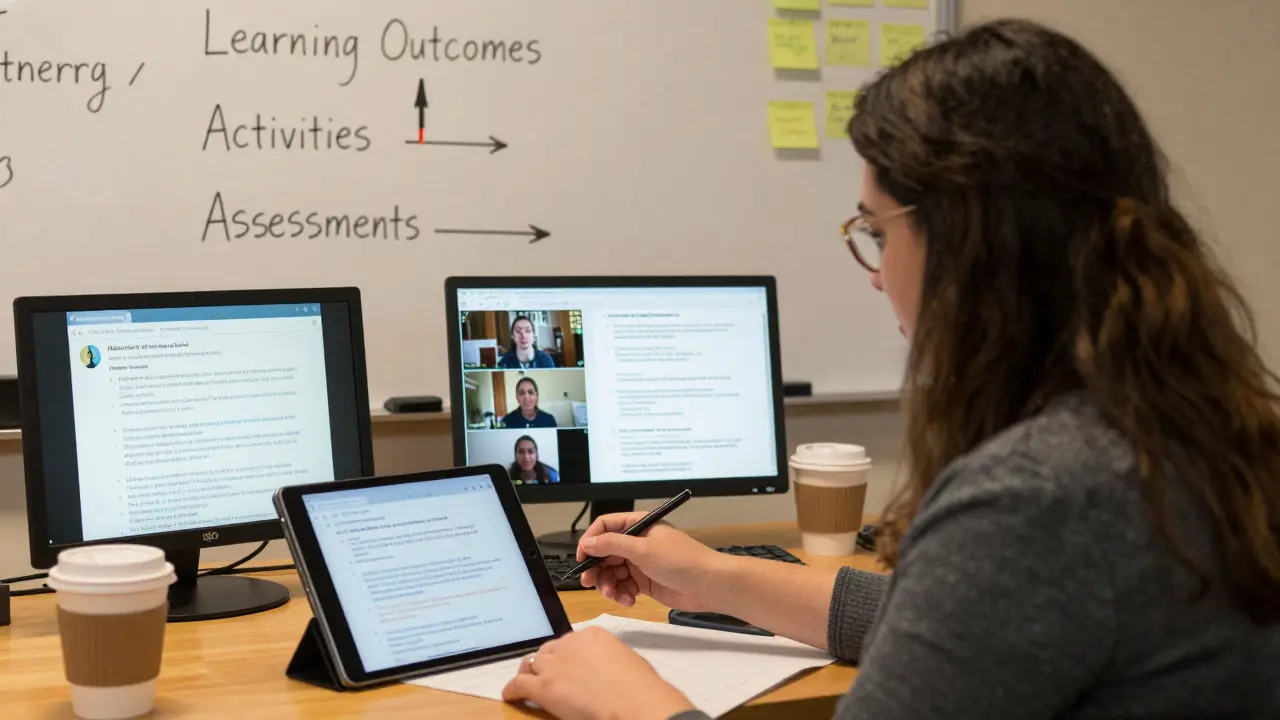

The Three Pillars of Alignment

Good alignment doesn’t happen by accident. It needs three things working together:

- Learning outcomes - Clear, measurable goals for what students should know or be able to do.

- Learning activities - The tasks, discussions, labs, or projects that help students reach those outcomes.

- Assessments - The way you check whether students actually learned it.

If one of these is out of sync, the whole system breaks. In blended settings, this happens often. Teachers design rich online discussions but still use a paper-based final exam. Or they assign group projects in person but assess individual performance with a quiz.

Here’s a real case: A university in Wellington redesigned its history course to be hybrid. Students watched lectures online, participated in asynchronous forums, and met once a week for debates. But the final assessment? A 50-question multiple-choice test based only on the video content. Students who engaged deeply in discussions scored poorly. The assessment didn’t reflect the actual learning.

How Blended and Hybrid Models Change the Game

Blended learning usually means students do some work online and some in person - but the same group does both. Hybrid learning often means some students are remote while others are on campus, so the experience is split.

In blended models, alignment means ensuring online activities lead directly to in-person assessments. For example, if students complete a simulation on a learning platform, the next in-person class should include a live debrief or application task that builds on it. The assessment then checks whether they understood the simulation - not just whether they clicked through it.

In hybrid models, the challenge is even bigger. You can’t assess remote students differently than in-person ones. But you also can’t assume they had the same access to tools, time, or support. So assessments must be designed to be fair across both environments.

Take a writing course. In a hybrid setting, some students attend workshops in person, others join via Zoom. If the grade for the final essay folds in “class participation,” you’re penalizing remote students. Instead, the assessment should focus on the final product - the essay - and use clear rubrics that measure the same skills regardless of delivery mode.

Practical Strategies for Better Alignment

You don’t need fancy tech to fix this. You need clarity and consistency.

- Start with outcomes - Write them in plain language: “Students will be able to analyze data from a survey” not “Understand research methods.”

- Match activities to outcomes - If the outcome is “explain cause and effect,” then discussion prompts, case studies, or peer feedback sessions should require that skill.

- Design assessments that mirror the activities - If students spent weeks doing peer reviews, the final assessment should include a peer review component.

- Use rubrics - Rubrics make grading fair and transparent. They also help students know what’s expected. A good rubric for a blended course might include categories like “quality of online contributions,” “depth of in-person analysis,” and “application of concepts in real scenarios.”

- Test your assessments - Try them out on a small group before rolling them out. Ask: “Does this actually show what they learned?”
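To make the rubric idea concrete, here is a minimal sketch of how a weighted rubric could turn per-criterion levels into one score. The three criteria are the ones named above; the weights, the 0-4 scale, and the names `RUBRIC`, `MAX_LEVEL`, and `rubric_score` are assumptions for illustration, not a recommended scheme.

```python
# Hypothetical weights (they sum to 1.0) and a 0-4 level scale.
# The article names the criteria only; everything else here is assumed.
RUBRIC = {
    "quality of online contributions": 0.3,
    "depth of in-person analysis": 0.3,
    "application of concepts in real scenarios": 0.4,
}
MAX_LEVEL = 4


def rubric_score(levels: dict[str, int]) -> float:
    """Convert per-criterion levels (0..MAX_LEVEL) into a percentage."""
    if set(levels) != set(RUBRIC):
        raise ValueError("score every criterion exactly once")
    for criterion, level in levels.items():
        if not 0 <= level <= MAX_LEVEL:
            raise ValueError(f"{criterion}: level must be 0..{MAX_LEVEL}")
    weighted = sum(RUBRIC[c] * levels[c] for c in RUBRIC)
    return round(100 * weighted / MAX_LEVEL, 1)


# One student's levels, scored the same way whatever the delivery mode.
score = rubric_score({
    "quality of online contributions": 3,
    "depth of in-person analysis": 4,
    "application of concepts in real scenarios": 2,
})
print(score)
```

Because the same function scores every student, the criteria and weights stay visible and can be argued over before grading starts rather than after.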

One teacher in Christchurch started using short video reflections after every online module. Students recorded 90-second clips explaining one thing they learned and how it connected to their lives. The final assessment? A 5-minute video essay. No written exam. No quiz. The results? Students who struggled with traditional testing thrived. Their understanding was clearer, deeper, and more personal.

Common Mistakes to Avoid

Even experienced educators fall into traps.

- Using the same old tests - Just because you used a multiple-choice exam in 2019 doesn’t mean it works now.

- Assuming online = easier - Online tasks often require more self-discipline. If you don’t assess that, you’re missing half the learning.

- Ignoring equity - Not all students have quiet spaces, fast internet, or time to participate in live sessions. Assessments that require real-time participation can exclude them.

- Letting tech drive assessment - Just because your LMS has a quiz tool doesn’t mean you should use it for everything. Tools serve goals - not the other way around.

Another problem: assessment overload. In blended models, teachers often add assessments for every online activity - forums, quizzes, polls, reflections. That’s not alignment. That’s busywork. The goal isn’t to collect more data. It’s to know if learning happened.

What Success Looks Like

When alignment works, you see it in student work. They connect ideas across platforms. They talk about how an online simulation helped them understand a concept they later tested in a lab. They can explain their reasoning, not just regurgitate answers.

One nursing program in Auckland switched from written exams to video case studies. Students recorded themselves assessing a simulated patient, then submitted it. Instructors graded based on communication, decision-making, and clinical reasoning - not memorized facts. Pass rates went up. Student confidence soared. And dropout rates fell.

Alignment isn’t about making things harder. It’s about making them meaningful.

Getting Started: A Simple Checklist

Here’s a quick way to audit your own course:

- List your top 3 learning outcomes.

- List your main learning activities (online and in-person).

- List your assessments.

- For each outcome, ask: Does at least one activity help students reach it? Does at least one assessment measure it?

- If the answer is no to any, redesign.
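If you keep your course plan in a spreadsheet or script, the checklist above can be sketched as a small audit. This is one possible reading of it, assuming each outcome needs at least one activity and at least one assessment; the `Course` class, the `audit` function, and the sample course data are hypothetical and only loosely modeled on the hybrid history example earlier.

```python
from dataclasses import dataclass, field


@dataclass
class Course:
    outcomes: list[str]
    # Each activity or assessment maps to the outcomes it supports or measures.
    activities: dict[str, set[str]] = field(default_factory=dict)
    assessments: dict[str, set[str]] = field(default_factory=dict)


def audit(course: Course) -> dict[str, list[str]]:
    """Return the gaps found for each outcome; aligned outcomes are omitted."""
    gaps: dict[str, list[str]] = {}
    for outcome in course.outcomes:
        problems = []
        if not any(outcome in targets for targets in course.activities.values()):
            problems.append("no activity")
        if not any(outcome in targets for targets in course.assessments.values()):
            problems.append("no assessment")
        if problems:
            gaps[outcome] = problems
    return gaps


# Hypothetical course data, made up for illustration.
history = Course(
    outcomes=["recall key events", "argue a position", "evaluate sources"],
    activities={
        "video lectures": {"recall key events"},
        "asynchronous forums": {"evaluate sources", "argue a position"},
        "weekly debates": {"argue a position"},
    },
    assessments={
        "multiple-choice final": {"recall key events"},
    },
)

report = audit(history)
for outcome, problems in report.items():
    print(f"{outcome}: {', '.join(problems)}")
```

In this made-up course, two of the three outcomes are practiced in forums and debates but never assessed, which is the kind of mismatch the checklist is meant to surface.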

You don’t need to overhaul everything. Start with one course. Fix one assessment. See the difference.

Assessment alignment isn’t a one-time task. It’s a habit. Check it every semester. Talk to students. Ask them: “Did this test really show what you learned?” Their answers will tell you more than any grade ever could.

Comments

Tia Muzdalifah

i just finished grading finals in my hybrid bio class and wow. we had students doing 90-second video recaps after each module and then a final video essay. the ones who actually did the vids? they could explain the science like it was a story. the ones who just did the quiz? barely remembered what a cell was. this is real.

also, no one cares about multiple choice anymore. we’re not training robots.

Zoe Hill

i just wanna say thank you for writing this. i’ve been trying to explain this to my dept chair for 2 years and they keep saying "but we’ve always done it this way." we switched to rubrics last semester and student engagement went up 40%. not because it was easier, but because they finally felt like their learning mattered.

Albert Navat

the real issue here is pedagogical inertia. we’re still operating under a transmission model of education - where knowledge is poured into passive recipients - when the data clearly shows that constructivist, scaffolded, multimodal assessment is the only thing that sticks. if you’re not measuring higher-order thinking through authentic tasks, you’re not teaching - you’re just administering content. period.

King Medoo

this is why education is broken. 🤦‍♂️ we let tech companies dictate how we assess learning. LMS quizzes? please. students aren’t learning - they’re clicking. i had a student last term who got 100% on every online quiz but couldn’t explain why photosynthesis matters in real life. we’re grading performance, not understanding. and we wonder why kids hate school.

it’s not about more data. it’s about deeper meaning.

Rae Blackburn

this is all just a distraction. the real problem? standardized testing. the feds force us to use bubble sheets. we have no choice. your "video essays"? cute. but your district will still get rated on SAT scores. this whole article is a luxury for rich schools.

we teach in trailer classrooms with 35 kids and no internet. don’t tell me to "redesign assessments" when i’m just trying to keep them alive.

Christina Kooiman

I cannot believe how many teachers are still using outdated methods. I mean, seriously? Multiple choice? In 2025? It’s embarrassing. If your students can’t articulate their reasoning, then they haven’t learned anything. I had a colleague who insisted on using Scantrons for her AP Literature class. She said, "It’s efficient." Efficient? Efficient for what? For grading? Not for learning.

And don’t even get me started on the idea that "online means easier." My students who struggled with in-class participation were thriving in discussion boards because they had time to think. But then we gave them a timed essay and they collapsed. That’s not a failure of the student - that’s a failure of the assessment.

Stephanie Serblowski

ok but can we talk about how we’re all pretending equity isn’t a factor? 🙃 remote students don’t have quiet spaces. some don’t have stable wifi. some are working 30 hours a week to pay rent. and we’re grading them on "participation in live discussions"?

the video reflections? genius. the rubric? perfect. but the moment you say "real-time engagement," you’ve already lost half your class.

also - i love that you mentioned Christchurch. that’s my hometown. we did this exact thing last year. students who used to drop out? now they’re presenting at education conferences. small wins.

Renea Maxima

alignment? what a buzzword. you’re all just chasing metrics. who says learning outcomes should be measurable? what if learning is messy? what if it’s nonlinear? what if some students learn by not doing, by observing, by sitting quietly while the world spins?

you’re trying to quantify the unquantifiable. and in doing so, you’re erasing the soul of education. the goal isn’t to "match" assessments to activities. the goal is to let students become who they are. not who your rubric says they should be.

Jeremy Chick

this is why i hate academia. you all overthink everything. just give them a project. let them build something. let them fail. then let them try again. that’s how you learn.

i taught a hybrid coding class last semester. no exams. no quizzes. just build a thing. if it works? you pass. if it doesn’t? you fix it. 90% of them ended up with portfolios. 10% dropped out. who cares? the 90% are now working. the others? probably still in undergrad.

stop making it so complicated.

Sagar Malik

the entire paradigm of assessment is rooted in western epistemological hegemony. your rubrics, your outcomes, your "authentic tasks" - these are all colonial constructs designed to normalize a particular mode of cognition. in my village in Punjab, knowledge is transmitted through oral tradition, communal reflection, and embodied practice. your video essays? your LMS? these are tools of cultural erasure.

we must deconstruct the assessment-industrial complex.

also, typo: "assessmnet" in paragraph 3. fix it.

Seraphina Nero

thank you for this. i’m a first-year teacher and i was so overwhelmed. i thought i had to do everything perfectly. this reminded me that small changes matter. i changed one assignment last week-replaced the essay with a 2-minute voice memo explaining their biggest takeaway. the responses? so honest. so human.

one kid said: "i didn’t know i could understand this until i heard myself say it."

that’s the goal.