Running an online community isn’t just about posting content. It’s about keeping the space safe, respectful, and useful. That’s where moderators come in. Whether you’re managing a course forum, a student study group, or a professional learning network, your moderator playbook needs clear policies, smart escalation steps, and the right tools. Without them, even the best-intentioned community can turn toxic, quiet, or chaotic.

Start with Clear Policies

Most moderation problems start because people don’t know the rules. A vague "be nice" guideline doesn’t cut it. You need specific, written policies that answer the obvious questions before they’re asked.

For example:

- What counts as harassment? (Is calling someone "lazy" in a discussion thread a violation? What about repeating the same question after being told where to find the answer?)

- Can members promote their own products or services? If so, under what conditions?

- What happens if someone posts sensitive personal information - like an email address or student ID?

- Are political or religious opinions allowed, or is the space strictly topic-focused?

These rules shouldn’t be buried in a PDF no one reads. Post them in a pinned message, link them in the welcome thread, and mention them every time someone crosses a line. Consistency matters more than perfection. People trust systems they can understand.

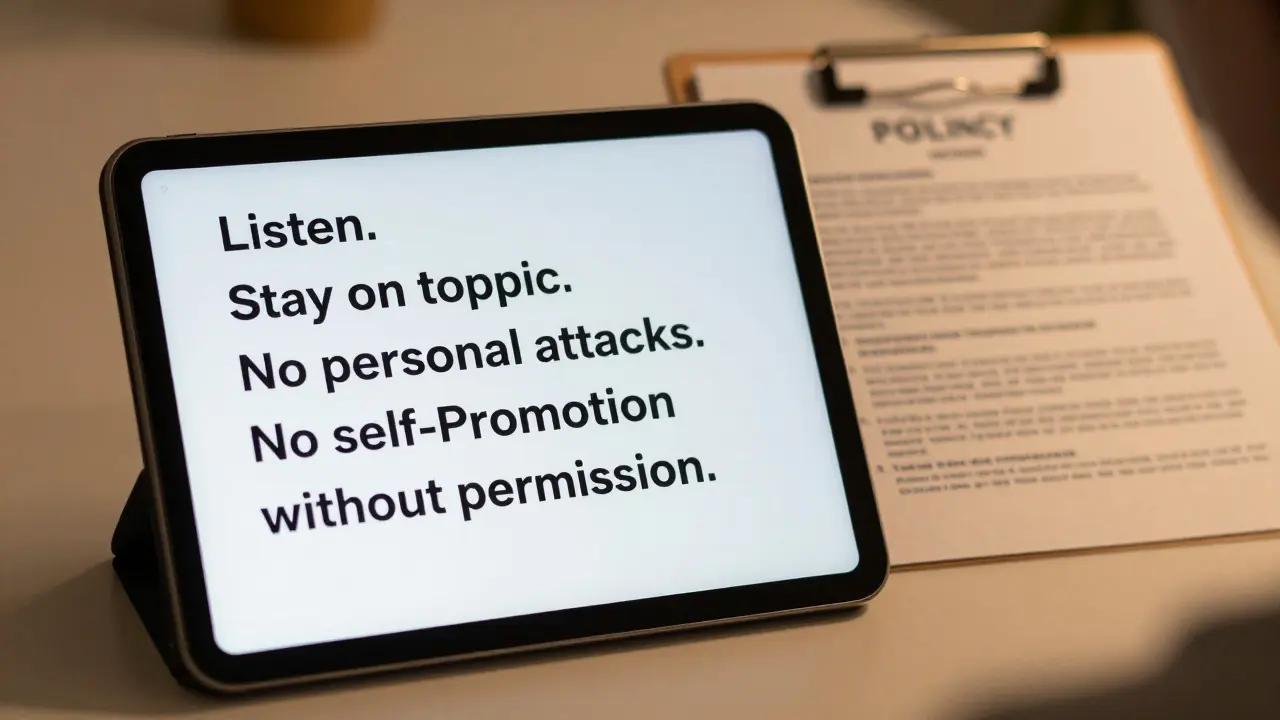

One course community in Auckland saw a 70% drop in conflict reports after they replaced their old "be respectful" rule with a 4-point code: Listen. Stay on topic. No personal attacks. No self-promotion without permission. Simple. Memorable. Enforceable.

Escalation Isn’t Punishment - It’s Protection

Not every issue needs a ban. Most problems can be resolved with a private message or a temporary timeout. But you need a clear path for when things get serious.

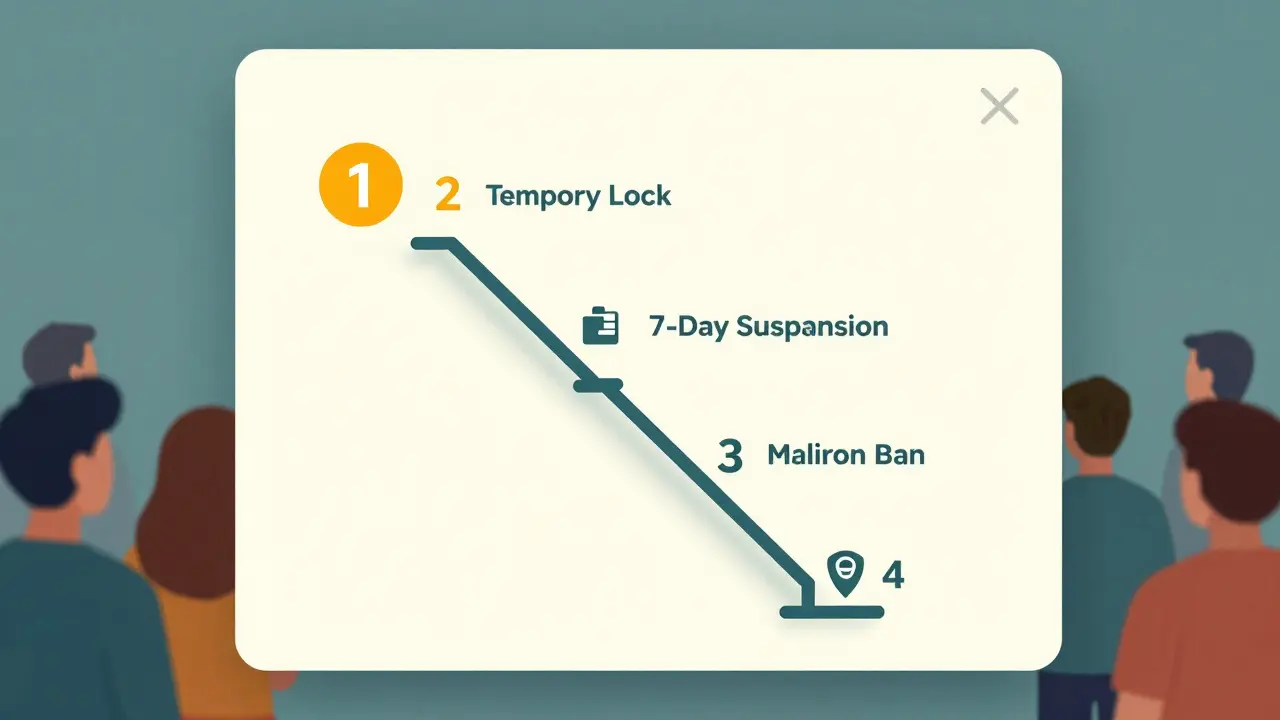

Here’s how a real escalation ladder works in practice:

- Warning (Level 1): A moderator sends a private note explaining the violation and linking to the policy. No action taken on the post or user.

- Temporary Lock (Level 2): The offending post is hidden for 24 hours. The user can still view the community but can’t post. Each user gets only one Level 1 warning per month, so a second violation within that month moves straight to this level.

- 7-Day Suspension (Level 3): User can’t log in or post. They receive a written explanation and an option to appeal.

- Permanent Ban (Level 4): Reserved for repeat offenders, hate speech, threats, or sharing illegal content. Requires approval from at least two moderators.
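The ladder above works like a small state machine. If you want a bot or admin script to suggest the next rung, the Python sketch below shows one possible approach. It is a hypothetical illustration, not a feature of any platform. The `Level` names, the set of severe offences, the 30-day window for counting repeats, and the rule that a clean month resets a member to a warning are all assumptions you would tune to your own policy.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from enum import IntEnum
from typing import List, Tuple


class Level(IntEnum):
    WARNING = 1
    TEMPORARY_LOCK = 2
    SUSPENSION_7_DAY = 3
    PERMANENT_BAN = 4


# Assumption: these categories skip the ladder and go straight to Level 4.
SEVERE = {"hate_speech", "threat", "illegal_content"}

# Assumption: violations closer together than this count as repeats.
REPEAT_WINDOW = timedelta(days=30)


@dataclass
class MemberRecord:
    # (when the action was taken, which rung was applied), oldest first
    history: List[Tuple[datetime, Level]] = field(default_factory=list)


def next_level(record: MemberRecord, category: str, now: datetime) -> Level:
    """Choose the rung for a new, confirmed violation."""
    if category in SEVERE:
        return Level.PERMANENT_BAN
    if not record.history:
        return Level.WARNING
    last_time, last_level = record.history[-1]
    if now - last_time > REPEAT_WINDOW:
        return Level.WARNING  # assumption: a clean month resets the ladder
    # Repeat offence: climb one rung, never past a permanent ban.
    return Level(min(last_level + 1, Level.PERMANENT_BAN))


def apply_action(record: MemberRecord, level: Level, now: datetime, approvals: int = 0) -> None:
    """Record the action, enforcing the two-moderator rule for bans."""
    if level == Level.PERMANENT_BAN and approvals < 2:
        raise PermissionError("A permanent ban needs approval from at least two moderators")
    record.history.append((now, level))
```

For example, a member who is warned on 1 March and reported again on 4 March lands on a temporary lock. A threat goes straight to Level 4, but `apply_action` refuses to record it until a second moderator signs off.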

Transparency here builds trust. When users see that bans aren’t random - they’re part of a clear, fair process - they’re more likely to follow the rules. And if someone appeals? Have a form. Have a timeline. Have a response. Even if the answer is "no," people respect being treated like adults.

One university’s online writing workshop used this ladder for six months. Suspensions dropped by 65%. Why? Because users knew what was coming. They weren’t surprised. And most didn’t want to risk losing access.

Tools You Actually Need

You don’t need 15 plugins. You need three tools that do the heavy lifting.

- Automated keyword filters: Block obvious spam, slurs, or phishing links before they appear. Don’t rely on AI to catch nuance - use simple lists. Example: block "free money," "click here," "your account is locked."

- Flagging system: Let members report issues with one click. Make sure reports go directly to moderators instead of getting buried in a general inbox. Include a checkbox: "This is harassment," "This is spam," "This is off-topic."

- Activity dashboard: Track who posts often, who gets reported, who disappears after a warning. Look for patterns. A user who posts 20 times a day but gets flagged 15 times? That’s a red flag. A user who never posts but flags 5 things a week? That’s a quiet ally.
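The first and third tools can be prototyped in a few lines if you want to test thresholds before changing a platform’s settings. This Python sketch is hypothetical: the blocklist is just the three example phrases above, and the dashboard cut-offs (at least 10 posts with half of them flagged is a red flag; no posts but 5 or more flags filed is a quiet ally) are assumptions loosely based on the examples in the text.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical blocklist taken from the examples above; extend it for your community.
BLOCKED_PHRASES = ["free money", "click here", "your account is locked"]

# One case-insensitive pattern; re.escape keeps any punctuation in a phrase literal.
_BLOCKLIST = re.compile("|".join(re.escape(p) for p in BLOCKED_PHRASES), re.IGNORECASE)


def blocked_phrase(text: str) -> Optional[str]:
    """Return the first blocked phrase in a post, or None if the post is clean."""
    normalized = " ".join(text.split())  # collapse runs of spaces and newlines
    match = _BLOCKLIST.search(normalized)
    return match.group(0).lower() if match else None


@dataclass
class Activity:
    posts: int          # posts made in the period
    times_flagged: int  # flags other members raised on those posts
    flags_filed: int    # flags this member raised on other people's posts


def dashboard_label(a: Activity) -> str:
    """Label one member's activity for a moderator to review (assumed thresholds)."""
    if a.posts >= 10 and a.times_flagged / a.posts >= 0.5:
        return "red flag"
    if a.posts == 0 and a.flags_filed >= 5:
        return "quiet ally"
    return "normal"
```

Plain substring matching will also catch innocent posts such as "click here for my notes," so keep the list to phrases that are almost never legitimate. Treat the dashboard label as a prompt for a human to look, not as an automatic action.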

Many platforms - like Discord, Circle, or Mighty Networks - include these tools out of the box. You don’t need to build anything. Just turn them on and set the thresholds. For example: auto-hide posts with 3+ flags within 10 minutes. That’s faster than any human can respond.
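The "3+ flags within 10 minutes" rule is a sliding-window count. On platforms that offer it out of the box you only set the numbers. The Python sketch below just illustrates the logic for anyone who wants to reason about it or script it. The `AutoHide` class is hypothetical. It assumes flags arrive in time order and that each member counts once per window, so one person can’t hide a post by clicking three times.

```python
from collections import deque
from datetime import datetime, timedelta
from typing import Deque, Dict, Set, Tuple


class AutoHide:
    """Hide a post once enough distinct members flag it within a time window."""

    def __init__(self, threshold: int = 3, window: timedelta = timedelta(minutes=10)):
        self.threshold = threshold
        self.window = window
        self._flags: Dict[str, Deque[Tuple[datetime, str]]] = {}
        self.hidden: Set[str] = set()  # stays hidden until a moderator reviews it

    def flag(self, post_id: str, reporter: str, at: datetime) -> bool:
        """Record a flag and return True if the post is now hidden."""
        recent = self._flags.setdefault(post_id, deque())
        recent.append((at, reporter))
        # Drop flags older than the window (assumes flags arrive in time order).
        while at - recent[0][0] > self.window:
            recent.popleft()
        distinct_reporters = {who for _, who in recent}
        if len(distinct_reporters) >= self.threshold:
            self.hidden.add(post_id)
        return post_id in self.hidden
```

Counting distinct reporters rather than raw clicks is a design choice: it makes the threshold harder to game, at the cost of ignoring a single member who flags the same post repeatedly.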

One online course for graphic designers switched from manual moderation to automated flagging + a 3-person moderation team. Their response time dropped from 18 hours to under 90 minutes. Community trust scores rose by 40% in three weeks.

Training Your Moderators

Most communities fail because they treat moderation like a volunteer side job. It’s not. It’s a skill.

Train your moderators like you’d train a teacher:

- Role-play tough conversations. Practice delivering a suspension notice without sounding angry.

- Review real examples - anonymized - of past conflicts. What worked? What backfired?

- Set expectations: "You are not here to win arguments. You are here to protect the space."

- Give them a 1-page cheat sheet: "When to move up the escalation ladder," "What to say in a warning," "When to hand a case to an admin."

And rotate the role. Don’t let one person carry the whole burden. Burnout leads to inconsistency - and inconsistency kills trust.

What Not to Do

Here are three mistakes that break communities:

- Reacting emotionally: If you’re angry, step away. A heated reply from a moderator makes the whole community feel unsafe.

- Ignoring small issues: Letting one person repeatedly interrupt discussions teaches others it’s okay to do the same.

- Changing rules mid-game: If you suddenly ban self-promotion after letting it slide for months, people will feel betrayed. Update policies openly, with notice.

One course community banned a long-time member for posting a link to their YouTube channel. They didn’t have a rule against it. The member posted a public complaint. Over 60% of the group sided with them. The community lost credibility. The rule wasn’t the problem. The surprise was.

Keep It Alive

A moderator playbook isn’t a document you write once and forget. It’s a living system. Review it every quarter. Ask members: "What’s one thing we should change?" Use anonymous feedback forms. Watch your metrics: Are reports going up? Are posts declining? Are new members leaving after their first week?

Communities thrive when people feel heard. Not because you’re popular. Not because you’re strict. But because they know what to expect - and trust that you’ll follow through.

What should I do if a member claims they were banned unfairly?

Have a formal appeal process. Ask them to submit a short written explanation. Review their activity history, the original report, and any moderator notes. Respond within 72 hours with a clear answer - even if it’s "we stand by our decision." Never leave someone hanging. Silence feels like dismissal.
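If appeals arrive through a form, a small tracker makes the 72-hour promise checkable. This is a hypothetical Python sketch, not part of any platform: the `Appeal` fields and the idea of listing overdue appeals during a daily moderator check are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional

RESPONSE_DEADLINE = timedelta(hours=72)


@dataclass
class Appeal:
    member: str
    explanation: str                # the member's short written explanation
    submitted_at: datetime
    decision: Optional[str] = None  # e.g. "overturned" or "we stand by our decision"

    @property
    def due_at(self) -> datetime:
        return self.submitted_at + RESPONSE_DEADLINE

    def is_overdue(self, now: datetime) -> bool:
        return self.decision is None and now > self.due_at


def overdue_appeals(appeals: List[Appeal], now: datetime) -> List[str]:
    """Members still waiting past the deadline, oldest deadline first."""
    return [a.member for a in sorted(appeals, key=lambda a: a.due_at) if a.is_overdue(now)]
```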

Can one person moderate a large course community?

Not sustainably. Even with automation, one person will burn out in 3-6 weeks. A healthy community needs at least 3 active moderators, with 1-2 backups. Rotate shifts. Share the load. Celebrate their work. Moderation is invisible until it fails.
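One way to keep "rotate shifts" from depending on memory is to generate the schedule. This Python sketch is hypothetical: it assumes a weekly cycle with one lead moderator per week and a backup on call, which is only one of many ways to split the load.

```python
from datetime import date, timedelta
from itertools import cycle
from typing import List, Tuple


def weekly_rotation(active: List[str], backups: List[str], start: date, weeks: int) -> List[Tuple[date, str, str]]:
    """Return (week start, lead moderator, backup on call) for each week."""
    if len(active) < 3:
        raise ValueError("Keep at least 3 active moderators")
    if not backups:
        raise ValueError("Name at least one backup")
    leads, covers = cycle(active), cycle(backups)
    return [(start + timedelta(weeks=i), next(leads), next(covers)) for i in range(weeks)]
```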

How do I know if my moderation policies are working?

Look at three things:

- Reports per 1,000 active users: if the number is rising, something’s wrong.

- New member retention: if people leave after their first week, they probably didn’t feel safe.

- Direct feedback: ask members in a quiet poll, "Do you feel this space is fair?" If fewer than 70% say yes, it’s time to revise.
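All three checks are simple ratios, so they’re easy to compute from whatever your platform exports. The Python sketch below is hypothetical: the function names are invented for illustration, and "rising" is taken to mean higher than the previous period.

```python
from typing import Sequence


def reports_per_1000(reports: int, active_users: int) -> float:
    if active_users <= 0:
        raise ValueError("active_users must be positive")
    return 1000 * reports / active_users


def is_rising(per_period: Sequence[float]) -> bool:
    """True if the latest period is higher than the one before it."""
    return len(per_period) >= 2 and per_period[-1] > per_period[-2]


def first_week_retention(joined: int, still_active_after_week: int) -> float:
    if joined <= 0:
        raise ValueError("joined must be positive")
    return still_active_after_week / joined


def needs_revision(said_fair: int, responses: int, threshold: float = 0.70) -> bool:
    """Revise policies if fewer than 70% of respondents say the space is fair."""
    if responses <= 0:
        raise ValueError("responses must be positive")
    return said_fair / responses < threshold
```

For example, 45 reports across 1,500 active users is 30 per 1,000; if last month’s figure was 22, the trend is rising. A poll where 130 of 200 members say the space is fair gives 65%, which falls under the 70% line.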

Should I allow anonymous reporting?

Yes - but with limits. Anonymous reports help people speak up without fear. But they can’t be the only way. Always ask for context: "What happened?" "When?" "Did anyone else see it?" Use this info to verify. If a report has no details, treat it as a heads-up, not proof.
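Writing the "heads-up, not proof" rule down helps every moderator apply it the same way. In this hypothetical Python sketch for anonymous reports, the three context fields mirror the questions above; the labels "heads-up" and "verify" are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Report:
    post_id: str
    reason: str              # e.g. "harassment", "spam", "off-topic"
    what_happened: str = ""
    when: str = ""
    witnesses: str = ""

    def context_given(self) -> int:
        """How many of the three context questions the reporter answered."""
        answers = (self.what_happened, self.when, self.witnesses)
        return sum(1 for answer in answers if answer.strip())


def triage(report: Report) -> str:
    """'verify' means investigate using the details; 'heads-up' means keep an eye on it."""
    if report.context_given() == 0:
        return "heads-up"  # no details: not proof of anything on its own
    return "verify"
```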

What’s the most common mistake new moderators make?

Trying to be liked instead of being fair. Moderators often delete posts to avoid conflict, or let people off the hook because they’re "nice." That erodes trust. People don’t want a friend. They want a reliable system. Be consistent. Be calm. Be clear.

Comments

Jeremy Chick

This is solid. I run a Discord server for game devs and we used the exact 4-point code they mentioned. Conflict dropped hard. No more 'but they're just joking' nonsense. People know the line and don't cross it. Simple works.

Also, auto-hide posts with 3 flags in 10 mins? YES. We set that up last month. Cut our moderation load by 80%.

Sagar Malik

Ah yes, the neoliberal moderation industrial complex. You folks are so eager to automate human interaction into sanitized, algorithmically-sanctioned echo chambers. The 'keyword filters' are just digital thought policing disguised as 'safety.' Who defines 'harassment'? The same corporate entities that monetize outrage. This playbook is a Trojan horse for compliance culture. We must resist the quantification of empathy.

Also, 'self-promotion without permission'? What if my YouTube channel is a radical pedagogical project? The system doesn't care about intent. It only sees keywords. We are being groomed for digital serfdom.

Seraphina Nero

I love how they said 'consistency matters more than perfection.' That’s the whole thing right there. I’ve been in communities where mods were nice but inconsistent, and it felt like walking on eggshells. No one knew what was allowed. But when you know the rules and they’re applied the same way every time? It feels safe. Like, really safe. That’s everything.

Megan Ellaby

The part about rotating moderators? HUGE. I was the only mod on my book club’s forum for 9 months. Burned out hard. Started missing reports. Started replying with sarcasm. Then we brought in two others. Things got so much better. We even started having monthly check-ins just to talk about hard cases. It helped us all. Moderation shouldn’t be a solo sport.

Also, anonymous reporting with context? YES. So many people are scared to speak up. But they’ll leave details if it’s easy. We got way more useful reports after we added that.

Rahul U.

👏👏👏 This is the blueprint. Simple rules, clear escalation, tools that work. We implemented this exact structure in our coding mentorship group. Suspensions dropped 70%. Engagement went up. People started helping each other more because they felt protected. The 'quiet ally' observation? Genius. We now have a 'flagger of the month' shoutout. It’s silly but it works.

And yes - 3 mods minimum. One person can’t do this forever. Burnout is real. We rotate weekly. Celebrate the grind. 💪

E Jones

Let me tell you what they’re not telling you. This 'playbook'? It’s not about safety. It’s about control. The keyword filters? They’re trained on biased data. The flagging system? It’s a surveillance tool. The 'activity dashboard'? It’s profiling. You think you’re building trust, but you’re building a digital panopticon. Who’s behind these platforms? Big Tech. Who profits when communities get quiet? The same ones who sell your data. This isn’t moderation - it’s pre-crime. They want you to police yourselves so they don’t have to. Wake up. This is how authoritarianism soft-lands. The '4-point code'? It’s a loyalty oath. And you’re signing it with every 'be respectful' post.

Barbara & Greg

The notion that 'consistency matters more than perfection' is, frankly, a dangerous abdication of moral responsibility. Moderation is not a mechanical process. It is an ethical one. To enforce rules without nuance - without discerning intent, context, or historical precedent - is to reduce human interaction to a series of algorithmic triggers. One must ask: Who benefits from this sterile, rule-bound order? And at what cost to the soul of discourse?

Furthermore, the dismissal of emotional response as 'unprofessional' is a deeply flawed paradigm. Emotion is not the enemy of justice. It is its compass.

selma souza

The phrase 'no self-promotion without permission' is grammatically ambiguous. Is it 'self-promotion' as a noun or 'self-promotion' as a gerund? Also, 'post them in a pinned message' - should be 'post them as a pinned message.' And 'link them in the welcome thread' - 'them' refers to plural 'rules,' but 'link them' should be 'link it' if referring to the policy document as a single entity. This entire document needs copyediting. I’m surprised this was published.

Frank Piccolo

Look, I get it. You want your little online club to feel cozy. But this playbook? It’s for people who think moderation is about being nice. Real communities don’t need 4-point codes. They need one rule: no nonsense. If someone’s a troll, ban them. No warnings. No appeals. No 'let’s have a conversation.' You coddle them once, they’ll eat your whole community alive. I’ve seen it. We had a guy in our forum who posted 30 times a day, got flagged 20 times, and the mods kept giving him 'chances.' Guess what? He turned the whole thing into a cult. Now it’s dead. Don’t be soft. Be decisive.

James Boggs

Excellent framework. Clear, practical, and scalable. We adopted the escalation ladder last quarter and saw a 60% reduction in complaints within 30 days. The key was training moderators to deliver warnings with empathy, not aggression. Also, the activity dashboard is a game-changer - we now have a 'quiet ally' program where users who flag responsibly get monthly recognition. Small thing. Big impact. Thank you for this.

Addison Smart

This is the most thoughtful moderation guide I’ve seen in years. I’ve been moderating cross-cultural learning forums for over a decade, and what stands out here is the emphasis on trust over control. The Auckland case study? I’ve seen that exact thing happen in our Southeast Asian cohort. When we replaced vague guidelines with clear, simple rules - 'Listen. Stay on topic. No personal attacks. No self-promotion without permission' - it was like a weight lifted. People stopped guessing. They started engaging.

And the rotation of moderators? That’s not just smart - it’s human. Burnout is the silent killer of communities. We now have a 3-person rotation with weekly debriefs. We even share a Slack channel just to vent and celebrate wins. It’s not glamorous. But it’s sustainable.

One thing I’d add: don’t just look at reports. Look at silence. The users who stop posting after a warning? They’re not fixed - they’re gone. Your job isn’t to punish. It’s to protect the space so people feel safe enough to stay.