Imagine you are sitting in a classroom. The teacher asks a question, and you get it wrong. In a traditional setting, the teacher moves on to the next topic, hoping you catch up later. In an adaptive learning system, the software immediately detects your struggle and adjusts. But here is the real question: how does it decide what to show you next? Does it follow a strict set of pre-written instructions, or does it use artificial intelligence to guess your needs based on millions of data points?

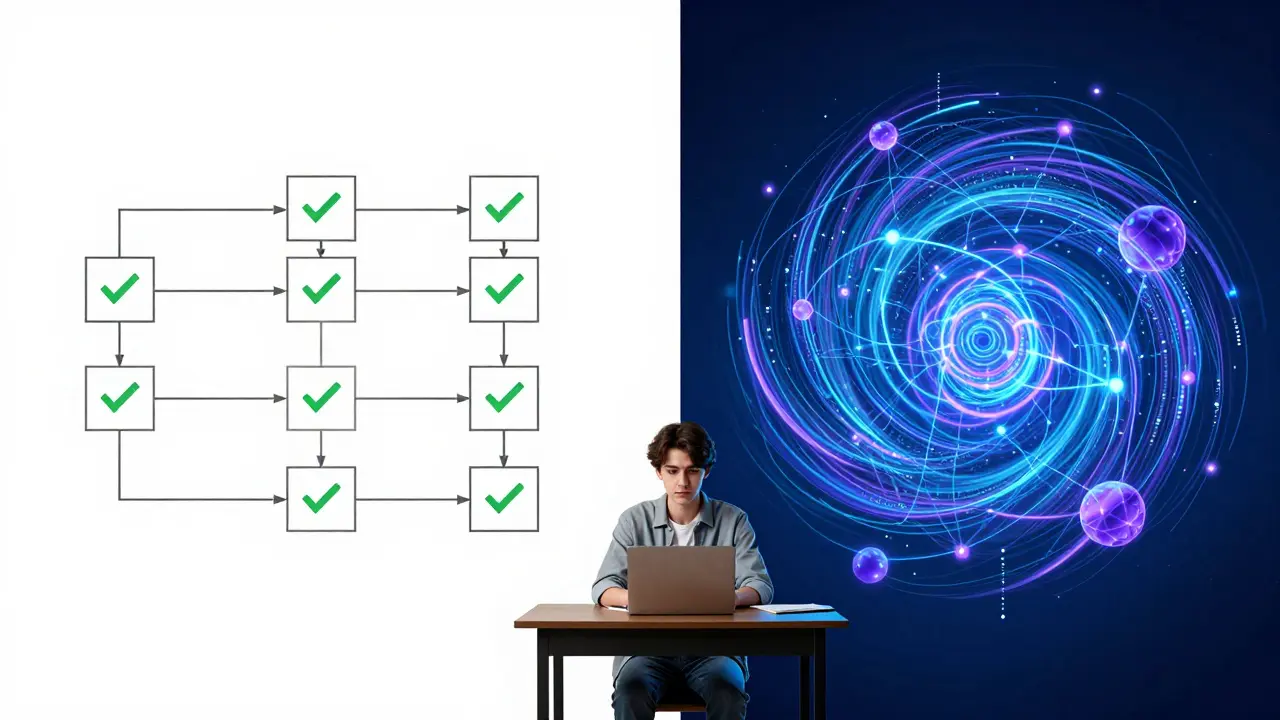

This is the core tension in modern educational technology. We have two main engines driving personalization: rules-based systems and AI-driven personalization. One is predictable and transparent; the other is dynamic and powerful but often opaque. Choosing between them isn't just a technical decision; it’s a pedagogical one that affects how students learn, trust their tools, and succeed.

The Logic of Rules-Based Personalization

Let’s start with the foundation. Rules-based personalization relies on explicit logic defined by instructional designers. It works like a flowchart. If a student answers three questions correctly about photosynthesis, they unlock the module on cellular respiration. If they miss two, the system loops them back to a review video. This approach has been around since the early days of computer-assisted instruction, evolving into sophisticated decision trees used by platforms like Knewton (in its earlier iterations) and many legacy Learning Management Systems.
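The flowchart described above can be sketched in a few lines of Python. This is a minimal illustration, not any platform's actual logic: the thresholds come from the example in the text, and the resource names (`photosynthesis_review_video`, `cellular_respiration_module`, and so on) are hypothetical.

```python
from dataclasses import dataclass

# Thresholds from the example in the text; real courses would tune these.
CORRECT_TO_ADVANCE = 3
MISSES_TO_REVIEW = 2


@dataclass
class TopicProgress:
    """Running tally of a learner's answers on one topic."""
    correct: int = 0
    missed: int = 0


def next_step(progress: TopicProgress) -> str:
    """Return the next resource for a learner on the photosynthesis topic.

    Resource names are hypothetical placeholders.
    """
    # Rule 1: two misses send the learner back to the review video.
    if progress.missed >= MISSES_TO_REVIEW:
        return "photosynthesis_review_video"
    # Rule 2: three correct answers unlock the next module.
    if progress.correct >= CORRECT_TO_ADVANCE:
        return "cellular_respiration_module"
    # Otherwise keep practicing the current topic.
    return "photosynthesis_next_question"


print(next_step(TopicProgress(correct=3, missed=0)))
print(next_step(TopicProgress(correct=1, missed=2)))
```

Every outcome traces back to one explicit `if` statement, which is exactly where the transparency of this approach comes from.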

The beauty of this method lies in its transparency. You know exactly why you are seeing a specific resource. There is no mystery box algorithm deciding your fate. For educators who need to align content strictly with state standards or accreditation requirements, this predictability is invaluable. If a curriculum mandates that Topic A must precede Topic B, a rules-based system enforces that sequence without deviation.

However, rigidity can be a double-edged sword. Rules-based systems struggle with nuance. They treat all errors equally. Did the student make a careless arithmetic mistake, or do they fundamentally misunderstand algebraic concepts? To a simple rule, both look like "incorrect answer." Consequently, the system might send a high-performing student back to basics simply because they had an off day, leading to frustration and disengagement. This lack of context awareness is the primary limitation of purely logical personalization.

The Power of AI-Driven Personalization

Enter artificial intelligence. AI-driven personalization uses machine learning models, often Bayesian Knowledge Tracing or deep neural networks, to analyze not just whether an answer was right or wrong, but the pattern of behavior surrounding it. How long did the student hesitate? Did they click away to search for help? What similar questions did they struggle with last week?
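To make one of these models concrete, here is a minimal sketch of the standard Bayesian Knowledge Tracing update for a single skill. The parameter values (`p_learn`, `p_slip`, `p_guess`) are illustrative assumptions, not figures from any real platform. Note that classic BKT looks only at whether each answer was correct; using signals such as hesitation time would require a richer model than this one.

```python
def bkt_update(p_known: float, correct: bool,
               p_learn: float = 0.1,
               p_slip: float = 0.1,
               p_guess: float = 0.2) -> float:
    """Return the updated probability that the learner knows the skill.

    p_known: prior probability the skill is already mastered
    p_learn: chance of learning the skill on this practice opportunity
    p_slip:  chance of answering wrong despite knowing the skill
    p_guess: chance of answering right without knowing the skill
    (All default values are assumed for illustration.)
    """
    if correct:
        evidence_known = p_known * (1 - p_slip)
        evidence_total = evidence_known + (1 - p_known) * p_guess
    else:
        evidence_known = p_known * p_slip
        evidence_total = evidence_known + (1 - p_known) * (1 - p_guess)

    # Bayes' rule: belief about mastery after seeing the answer.
    posterior = evidence_known / evidence_total

    # The learner may also have learned the skill during this attempt.
    return posterior + (1 - posterior) * p_learn


# Track one learner across a short answer sequence.
p = 0.5
for answer in [True, False, True, True]:
    p = bkt_update(p, answer)
    print(f"correct={answer} -> P(known)={p:.3f}")
```

Unlike a fixed rule, a single wrong answer only lowers the estimate; it takes a consistent pattern of errors to convince the model that the skill is missing.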

Platforms like ALEKS and DreamBox use these techniques to build a dynamic profile of each learner. The system doesn't just follow a map; it draws the map in real time. It can identify that a student understands the concept of fractions visually but struggles with symbolic representation, and suggest a different type of practice problem rather than repeating the same format.

This adaptability leads to higher efficiency. Students spend less time reviewing what they already know and more time mastering new skills. In large-scale deployments, such as those seen in corporate training or massive open online courses (MOOCs), AI can handle the complexity of thousands of unique learning paths simultaneously. It scales personalization in a way human teachers physically cannot.

Yet, this power comes with the "black box" problem. Because the decisions are made by complex algorithms, it is often difficult to explain *why* a specific recommendation was made. For a teacher trying to intervene, knowing that the AI flagged a student as "at risk" is less helpful than knowing which specific prerequisite skill is missing. Trust becomes a significant factor when the logic is hidden.

When to Choose Rules-Based Systems

So, when should you stick to the tried-and-true rules-based approach? The answer depends heavily on your constraints and goals. Here are the scenarios where rigid logic wins:

- Compliance and Certification: If your course leads to a professional certification or legal compliance requirement (like workplace safety training), you cannot afford ambiguity. Every learner must demonstrate proficiency in specific, non-negotiable topics. Rules ensure every required node is visited.

- Small Content Libraries: AI requires data to learn. If you have a small library of content (say, 50 modules), the interaction data it generates is usually too sparse for a model to draw reliable conclusions. Complex models will likely overfit or produce erratic recommendations. Simple branching logic is more reliable here.

- Budget Constraints: Developing and maintaining robust AI models requires significant computational resources and specialized data science talent. Rules-based systems are cheaper to build and host. For startups or smaller institutions, this cost difference is decisive.

- Transparency Requirements: If stakeholders demand full visibility into the learning path (for example, parents wanting to see exactly why their child is retaking a lesson), rules provide clear audit trails. AI explanations can feel vague or unconvincing.

When to Switch to AI-Driven Personalization

On the flip side, there are clear signals that you need the flexibility of AI. Consider moving toward intelligent personalization if:

- You Have Large Scale Data: Once you have hundreds or thousands of learners interacting with your content, patterns emerge that humans can’t manually code. AI thrives on this volume, identifying subtle correlations between performance metrics and success.

- Content is Non-Linear: In subjects like creative writing, strategic management, or complex engineering, there is rarely one correct path. Learners benefit from exploring connections. AI can recommend diverse resources based on cognitive style rather than forcing a linear progression.

- Engagement is Dropping: If students are churning out of your platform, rigid sequences might be the culprit. AI can detect boredom or confusion through interaction patterns and inject variety, such as switching from text to video or changing difficulty levels, to maintain optimal challenge.

- Long-Term Skill Development: For lifelong learning platforms, the goal is continuous growth. AI can track progress over months or years, adjusting goals dynamically as the learner’s career or interests shift, something static rules cannot easily accommodate.

The Hybrid Approach: Best of Both Worlds

In practice, the most effective adaptive learning platforms in 2026 do not choose one side exclusively. They use a hybrid model. Think of it as a framework with a flexible interior. The outer structure (core competencies, mandatory assessments, and final outcomes) is governed by strict rules to ensure quality and compliance. Inside that framework, AI drives the daily experience.

For instance, a medical education platform might use rules to ensure every student completes a specific set of anatomy quizzes before moving to clinical simulations. However, within the quiz section, AI determines the order of questions, the type of feedback provided, and whether to offer hints based on the student’s confidence level. This combination provides the safety net of rules with the agility of AI.
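The medical education example can be sketched as a hard rule wrapped around an adaptive ordering step. Everything named here is hypothetical: the quiz ids, the difficulty scores, and especially `order_questions`, which is a simple stand-in heuristic (closest difficulty to estimated mastery first) occupying the place where a trained model would sit.

```python
from __future__ import annotations

# Rule layer: quizzes every student must finish (hypothetical ids).
REQUIRED_QUIZZES = {"anatomy_upper_limb", "anatomy_thorax"}


def can_start_simulations(completed: set[str]) -> bool:
    """Hard gate: all required quizzes must be complete, no exceptions."""
    return REQUIRED_QUIZZES <= completed


def order_questions(difficulty: dict[str, float], mastery: float) -> list[str]:
    """Adaptive layer (stand-in for a learned model).

    Orders questions so that those closest in difficulty to the learner's
    estimated mastery come first. Ties are broken by question id.
    """
    return sorted(difficulty, key=lambda q: (abs(difficulty[q] - mastery), q))


# Usage: the gate is non-negotiable, the ordering inside it adapts.
completed = {"anatomy_upper_limb"}
print(can_start_simulations(completed))

questions = {"q1": 0.2, "q2": 0.5, "q3": 0.9}
print(order_questions(questions, mastery=0.6))
```

Keeping the gate and the ordering in separate functions means the compliance rule can be audited on its own, while the adaptive part can be swapped for a real model without touching it.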

Implementing this requires careful architecture. You need a content tagging system that allows AI to understand relationships between resources. Without rich metadata describing the skills, difficulty, and modality of each piece of content, AI has nothing to work with. The investment in content structuring pays off by enabling both rule-based filtering and AI-driven discovery.
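As a rough illustration of what such metadata might look like, the sketch below tags each resource with skills, difficulty, and modality, then runs a simple rule-based filter over the catalog. The field names, the 0.0 to 1.0 difficulty scale, and the sample items are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class ContentItem:
    """One tagged resource in the content library (hypothetical schema)."""
    item_id: str
    skills: FrozenSet[str]   # skills the resource teaches or assesses
    difficulty: float        # assumed scale: 0.0 easiest to 1.0 hardest
    modality: str            # e.g. "video", "text", "practice"


def filter_items(items: List[ContentItem], skill: str,
                 modality: Optional[str] = None,
                 max_difficulty: float = 1.0) -> List[ContentItem]:
    """Rule-based filter: keep items matching skill, modality, difficulty."""
    return [
        item for item in items
        if skill in item.skills
        and item.difficulty <= max_difficulty
        and (modality is None or item.modality == modality)
    ]


catalog = [
    ContentItem("frac-video-1", frozenset({"fractions"}), 0.3, "video"),
    ContentItem("frac-practice-1", frozenset({"fractions"}), 0.7, "practice"),
    ContentItem("ratio-text-1", frozenset({"ratios", "fractions"}), 0.5, "text"),
]

easy_fraction_items = filter_items(catalog, "fractions", max_difficulty=0.5)
print([item.item_id for item in easy_fraction_items])
```

The same tags that power this filter are what a recommendation model would consume as features, which is why the tagging work serves both approaches.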

| Feature | Rules-Based | AI-Driven |
|---|---|---|
| Decision Logic | Explicit, predefined IF-THEN statements | Implicit, learned from data patterns |
| Transparency | High (easy to debug) | Low (black box effect) |
| Data Requirement | Minimal | Large volumes of historical data |
| Cost to Implement | Low to Medium | High (requires ML expertise) |
| Flexibility | Rigid | Dynamic and adaptive |
| Best For | Compliance, small libraries, linear curricula | Scale, engagement, non-linear skills |

Pitfalls to Avoid in Implementation

Moving toward personalization, regardless of the method, introduces risks. One common mistake is assuming that personalization equals better learning automatically. Poorly designed rules can lead to "looping hell," where students repeat the same content endlessly without progress. Similarly, poorly tuned AI can create echo chambers, only showing students content similar to what they already know, limiting exposure to challenging material.
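One possible safeguard against the looping problem is to cap how many times a rule can send a learner back to the same review before handing off to a person. The sketch below assumes a hypothetical limit of two loops and hypothetical action names; it illustrates the idea rather than a recommended setting.

```python
# Hypothetical cap on how often the same review may be repeated.
MAX_REVIEW_LOOPS = 2


def route_after_miss(review_count: int) -> str:
    """Decide what happens after a learner fails a checkpoint.

    review_count: how many times the learner has already been sent
    back to the review for this checkpoint.
    """
    if review_count >= MAX_REVIEW_LOOPS:
        # Stop repeating the same content and bring in human support.
        return "escalate_to_teacher"
    return "review_video"


for attempts in range(4):
    print(attempts, route_after_miss(attempts))
```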

Another pitfall is ignoring the human element. Teachers and trainers need dashboards that translate system data into actionable insights. If the AI flags a student as struggling, the teacher needs to know *what* to do about it. Integrating these systems into existing workflows is crucial. Personalization fails if it isolates the learner from human support.

Finally, privacy concerns cannot be overlooked. AI-driven systems collect granular behavioral data. Ensuring compliance with regulations like GDPR or FERPA is essential. Users must understand how their data is used to personalize their experience, and they must have control over it. Transparency builds trust, which is the foundation of any successful educational relationship.

Is AI-driven personalization always better than rules-based?

Not necessarily. AI is better for scale, engagement, and non-linear learning paths, but it requires significant data and resources. Rules-based systems are superior for compliance, transparency, and situations with limited content or budget. The best choice depends on your specific educational goals and constraints.

How much data do I need for AI personalization to work?

While there is no hard number, AI models generally require hundreds to thousands of learner interactions to begin producing reliable recommendations. With very small datasets, simpler rule-based approaches are often more accurate and stable.

Can I combine both methods in one platform?

Yes, and many leading platforms do. A hybrid approach uses rules to enforce critical milestones and compliance requirements, while AI handles the day-to-day sequencing and resource recommendations within those boundaries.

What are the biggest risks of using AI in education?

The main risks include the "black box" problem (lack of transparency in decision-making), potential bias in algorithms, privacy concerns regarding student data, and the possibility of creating echo chambers that limit intellectual diversity.

Which industries benefit most from AI-driven personalization?

Industries with large, diverse workforces and complex skill sets, such as healthcare, technology, and finance, benefit greatly. Corporate training programs that need to scale personalized development across thousands of employees find AI particularly valuable.

Comments

Jess Ciro

you think this is just about education? nah. its a surveillance state in disguise. every click tracked every hesitation measured they want to know you better than you know yourself so they can sell you the perfect lie at the perfect time. wake up sheeple

saravana kumar

the author clearly has never actually built an LMS from scratch or even touched a production database with more than a few thousand rows. rules based systems are not rigid they are deterministic and that is what engineers prefer because it means we can predict failure modes. ai driven personalization sounds fancy until you realize it requires a data science team of fifty people just to keep the model from drifting into nonsense after two weeks of new user behavior patterns. also the claim that ai scales personalization is laughable because most schools do not have the bandwidth to support real-time inference for thousands of students simultaneously without crashing their servers during peak hours.

Tamil selvan

I completely agree with the sentiment expressed regarding the need for transparency; however, I must gently point out that the hybrid approach mentioned is indeed the most prudent path forward. It is very important to consider that educators require clear metrics to assess student progress effectively. When we implement AI tools, we must ensure that they augment rather than replace human intuition. The emotional connection between teacher and student cannot be replicated by algorithms no matter how sophisticated they become. Therefore, maintaining a balance is crucial for holistic development.

Mark Brantner

lol yeah right like any school actually has the budget for this stuff. my district cant even afford working projectors let alone some fancy ai black box that decides if im smart enough to pass math. sounds like another tech bro solution looking for a problem. but sure keep selling us on the dream while our teachers burn out trying to manage twenty different platforms that dont talk to each other.

Kate Tran

i mean its nice to see someone finally admit that rules arent always bad. usually these articles act like ai is magic dust that fixes everything instantly. but yeah privacy is a huge deal here. i dont trust companies with my kids data period. especially when they say they use it for personalization which sounds like a polite way of saying targeted advertising.

amber hopman

actually i think the comparison table oversimplifies the cost aspect significantly. implementing robust rule engines is surprisingly expensive when you factor in the instructional design hours needed to map out every possible branch. whereas once an ai model is trained it can scale with marginal costs. however i concede that the initial data requirement is a massive barrier to entry for smaller institutions. perhaps a middle ground would be using lightweight machine learning models that require less data than deep neural networks?

Jim Sonntag

hey look at all these experts arguing over who gets to control the learning path. meanwhile the students are just sitting there waiting for someone to tell them what to do. maybe instead of choosing between rules and ai we should focus on teaching critical thinking skills so kids can navigate information themselves regardless of the platform. but whatever lets keep building walled gardens and calling it innovation.

Deepak Sungra

oh great another article telling me how my job is going to be replaced by a computer that doesnt understand context. i spend half my day fighting with the lms anyway why add another layer of complexity that breaks every time someone updates the software. and dont get me started on the black box issue. when a student fails i need to know why not some vague algorithmic score that tells me nothing useful. its exhausting dealing with tools that pretend to help but only create more work.

Samar Omar

one must acknowledge the profound philosophical implications of delegating pedagogical decisions to opaque algorithms, for such a shift fundamentally alters the nature of knowledge acquisition itself, transforming it from a human-centric endeavor into a mechanistic process optimized for efficiency rather than enlightenment, thereby risking the erosion of intellectual autonomy and the subtle art of serendipitous discovery that often leads to true understanding, which is precisely why the pretentious elite who champion these technologies fail to grasp the deeper cultural degradation occurring beneath the surface of their sleek interfaces and polished dashboards.